Understanding the Existing Literature - The Statistical/Counterfactual "Causal Inference Paradigm"

The Origin of Modern Counterfactual Causal Inference - The Potential Outcomes Model

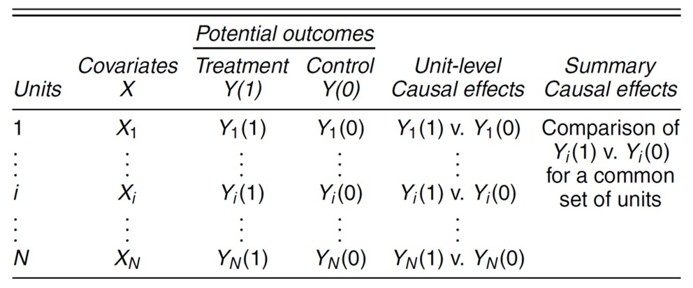

Figure 6.1. A. Rubin’s (2005) description of the Potential Outcomes model and its relationship to counterfactual (cf) causal effect estimands.

The causal inference paradigm, as currently described, is a fusion developed from three sources. The foundational conceptualization, which supplies the most commonly used basis for the evidential requirements and restrictions, comes from the imagined world created in the statistician D. B. Rubin’s Potential Outcomes Model (Rubin 1972; 2005; Fig. 2A). The potential outcomes (PO) model rests upon a conceptualization of causation that aligns with the statistical estimation of counterfactual causal effects. The PO model generalizes the idealized properties of randomized manipulative experiments so as to extend them to quasi-experimental settings where manipulation and random assignment do not take place. In doing so, it relies on counterfactual comparisons.
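For readers who want the counterfactual comparison in symbols, the following is a minimal sketch in the usual binary-treatment notation. The symbols ($T_i$, $Y_i(1)$, $Y_i(0)$, $\tau_i$) are illustrative assumptions and are not taken from the figure above.

```latex
% Unit-level causal effect: the difference between the two potential outcomes
\tau_i = Y_i(1) - Y_i(0)

% Only one potential outcome is ever observed for a given unit
Y_i^{\mathrm{obs}} = T_i \, Y_i(1) + (1 - T_i) \, Y_i(0)

% Average treatment effect (a typical counterfactual estimand)
\mathrm{ATE} = \mathbb{E}\left[ Y_i(1) - Y_i(0) \right]

% If assignment is independent of the potential outcomes (as under randomization),
% the ATE equals a difference of observed group means
\mathrm{ATE} = \mathbb{E}\left[ Y_i^{\mathrm{obs}} \mid T_i = 1 \right] - \mathbb{E}\left[ Y_i^{\mathrm{obs}} \mid T_i = 0 \right]
```

The last equality is what fails in quasi-experimental settings when assignment depends on factors that also affect the outcome, which is why the paradigm places so much weight on controlling confounders.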

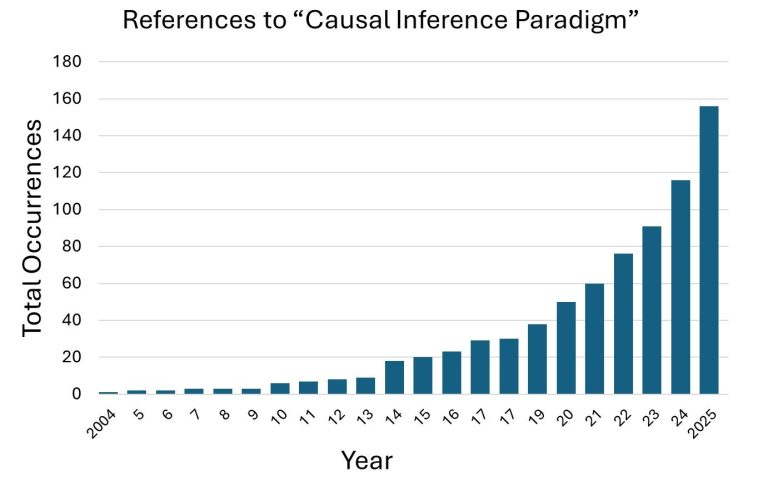

The Emergence of the "Causal Inference Paradigm"

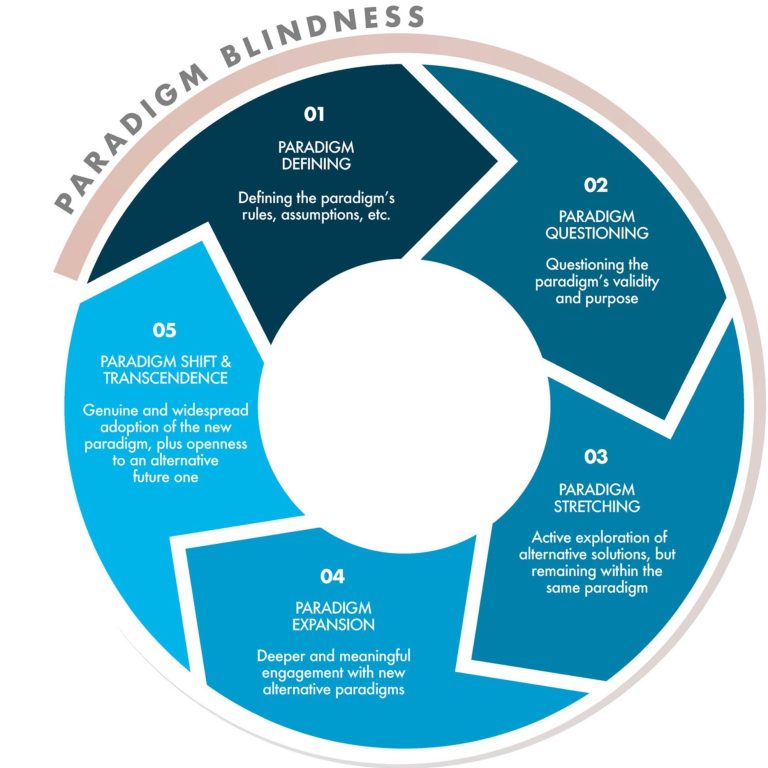

Many readers may not be familiar with what constitutes a paradigm in the literature, how paradigms form, and what role they play. I have framed my work in terms of paradigms since my first paper (Grace 2024. "An integrative paradigm for building causal knowledge") to my most recent ("Call for a paradigm shift"). My goal in taking this approach is to produce a coherent body of literature where foundational assumptions are made explicit. I urge readers to read the 1-page excerpt attached. The link below to "ParadigmShift" provides a more extensive description.

A description of the "causal inference paradigm" can be found in

Wu et al. Causal inference: a statistical paradigm for inferring causality.

Note that most people using causal inference methods do not know they are adhering to a prescribed paradigm. Others believe they are adhering to it but are in fact deviating from it (by relying intuitively on mechanistic knowledge).

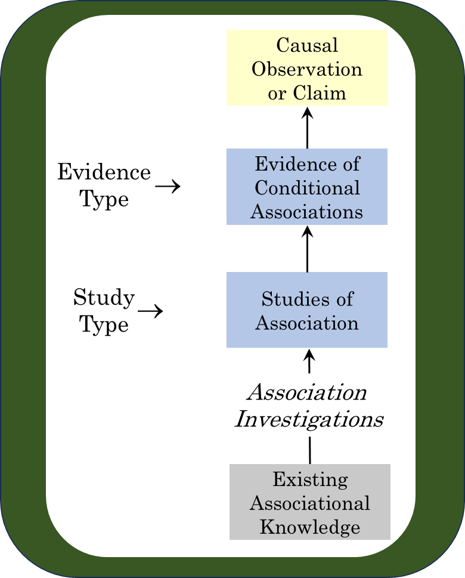

The Current Counterfactual "Causal Inference Model"

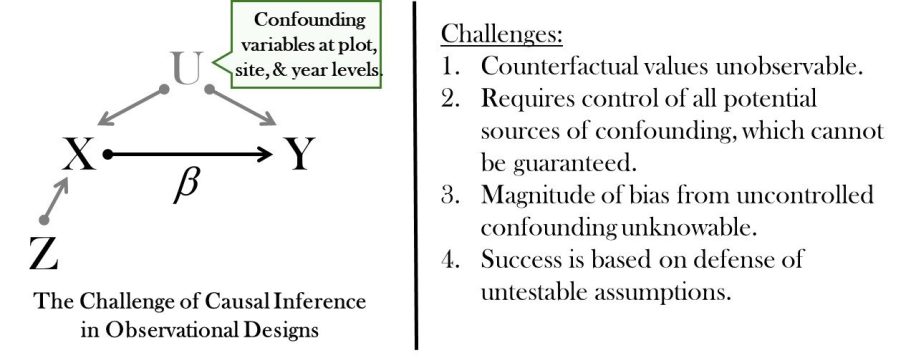

During the past decade, the PO framework has commonly been paired with the graphical modeling system that the computer scientist J. Pearl developed as part of the Structural Causal Model. The fusion of the two systems has been promoted on the basis of work in the 1990s demonstrating their statistical equivalence. The graphical modeling system now serves primarily as a user interface for developing strategies for isolating counterfactual effects, but also as a constant reminder of the threat from omitted confounders (Figure 6.3). Finally, a third source contributing to the statistical causal inference paradigm (SCIP) comes from study designs developed as implementation strategies, such as the use of discontinuities in exposures, analytical methods for exploiting temporal events, and group equalization methods for non-randomized exposures.

Figure 6.2. Representation of the challenge of statistical causal inference in observational data (modified from Dee et al. 2023). The theoretical requirement is to obtain a perfectly unbiased estimate of the effect of X on Y, β. Confounding variables U pose a pervasive threat to that goal that must be addressed. Z refers to an alternative method for bias control using instrumental variables.
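To make the role of Z in the caption concrete, here is a minimal simulation sketch. All coefficients, the sample size, and the variable names are illustrative assumptions, not values from Dee et al. (2023). It assumes a linear system in which Z affects Y only through X and is independent of U, and it uses the simple ratio-of-covariances (Wald) form of the instrumental-variable estimator.

```python
import random


def cov(a, b):
    """Population covariance of two equal-length sequences."""
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    return sum((i - mean_a) * (j - mean_b) for i, j in zip(a, b)) / len(a)


# Hypothetical data-generating process (all numbers are assumptions).
TRUE_BETA = 2.0
rng = random.Random(1)
x, y, z = [], [], []
for _ in range(50_000):
    u = rng.gauss(0, 1)                           # unobserved confounder U
    zi = rng.gauss(0, 1)                          # instrument Z, independent of U
    xi = 1.0 * zi + 0.8 * u + rng.gauss(0, 1)     # X depends on Z and U
    yi = TRUE_BETA * xi + 1.5 * u + rng.gauss(0, 1)  # Y depends on X and U, not on Z directly
    x.append(xi)
    y.append(yi)
    z.append(zi)

# Regressing Y on X alone absorbs part of U's effect into the slope.
naive_beta = cov(x, y) / cov(x, x)

# Ratio-of-covariances IV estimate: uses only the variation in X induced by Z.
iv_beta = cov(z, y) / cov(z, x)

print(f"true beta = {TRUE_BETA}, naive = {naive_beta:.2f}, IV = {iv_beta:.2f}")
```

In this sketch the instrument recovers β only because the simulation builds in the required assumptions. Whether a real variable satisfies them is a question that typically has to be argued from subject-matter knowledge rather than settled by the data alone.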

Demystifying the Possible Standards for Causal Inference

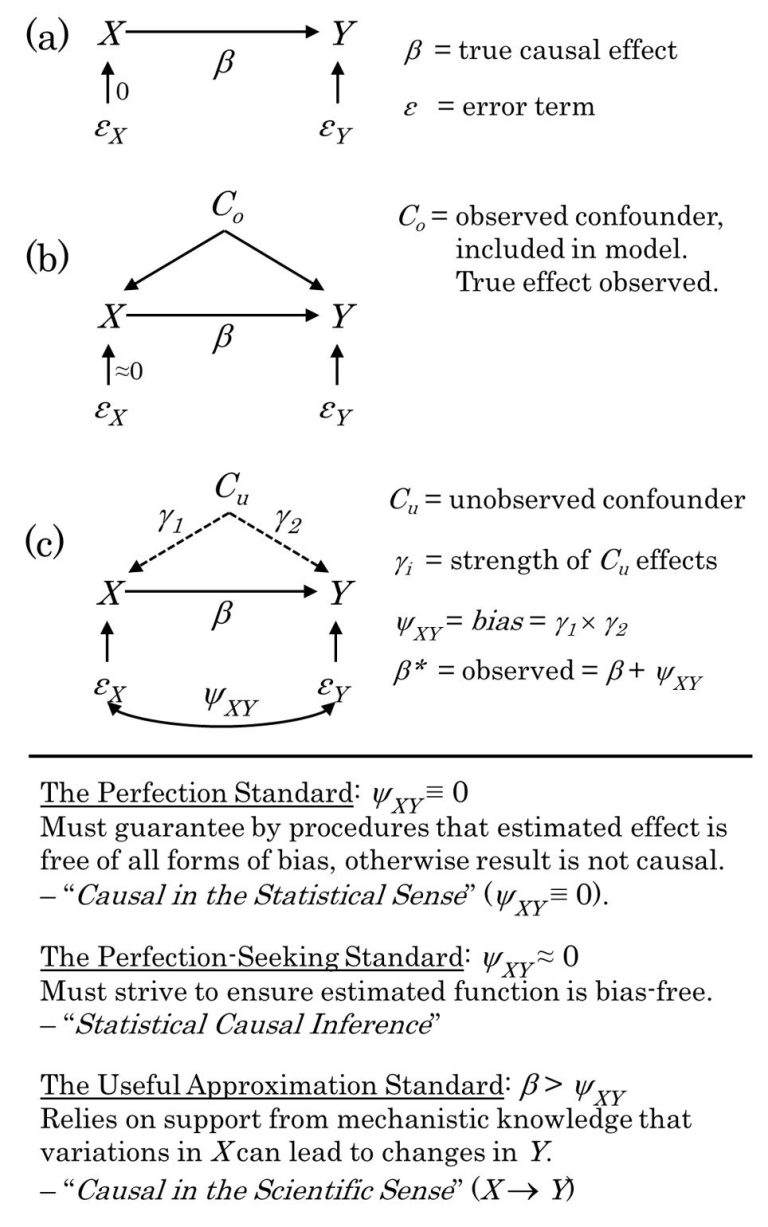

Figure 6.3. Representation of the challenge of estimating from some dataset the causal effect of some variable X on another variable Y (modified from Grace, 2024).

(a) Causal diagram for the case where there are no confounders and no errors in measuring X.

(b) Causal diagram for the case where observed confounders "Co" have been measured and are included in the model and thereby controlled for. Minimal measurement error in X is an additional assumption.

(c) Causal diagram illustrating the case where there exist unobserved confounders "Cu" omitted from the model. In the lower portion of the figure, three contrasting evidential standards are defined.
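The contrast between cases (b) and (c) can be sketched with a small simulation. This is a hedged illustration only: the linear model, the coefficients, and the function names are assumptions introduced here and do not come from Grace (2024). The same simulated confounder C is either included in the regression, as in case (b), or omitted, as in case (c).

```python
import random


def cov(a, b):
    """Population covariance of two equal-length sequences."""
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    return sum((i - mean_a) * (j - mean_b) for i, j in zip(a, b)) / len(a)


def simulate(n=20_000, beta=2.0, seed=0):
    """Generate (c, x, y) from an assumed linear system with one confounder."""
    rng = random.Random(seed)
    c, x, y = [], [], []
    for _ in range(n):
        ci = rng.gauss(0, 1)                       # confounder
        xi = 0.8 * ci + rng.gauss(0, 1)            # X is partly driven by C
        yi = beta * xi + 1.5 * ci + rng.gauss(0, 1)  # Y is driven by X and C
        c.append(ci)
        x.append(xi)
        y.append(yi)
    return c, x, y


def slope_omitting_confounder(x, y):
    """Case (c): regress Y on X alone, leaving the confounder out."""
    return cov(x, y) / cov(x, x)


def slope_adjusting_for_confounder(c, x, y):
    """Case (b): coefficient on X in the two-predictor regression Y ~ X + C."""
    sxx, scc, sxc = cov(x, x), cov(c, c), cov(x, c)
    return (cov(x, y) * scc - sxc * cov(c, y)) / (sxx * scc - sxc ** 2)


c, x, y = simulate()
omitted = slope_omitting_confounder(x, y)
adjusted = slope_adjusting_for_confounder(c, x, y)
print(f"true beta = 2.0, confounder omitted = {omitted:.2f}, adjusted = {adjusted:.2f}")
```

Under these assumed values the omitted-confounder estimate overstates the effect while still getting its direction right. How much such bias matters in a given application is the question the contrasting evidential standards answer differently.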

The so-called Causal Inference Paradigm is based on the perfection-seeking standard as a limited approach to approximating results from randomized experiments.

I have pointed out that there exists another possible standard, the Useful Approximation Standard. For example, imagine we are characterizing the effects of a wildfire on forest cover (Figure 4.6). Does any reasonable person claim that we can't know that forest cover is reduced by the fire? This standard has been dismissed by advocates for the statistical paradigm multiple times. They need to zoom out, I would argue.

The Current Limits of the Statistical Causal Inference Paradigm

Figure 6.4. There is no dispute from me that counterfactual causal inference has an important place in causal methodology. This is why I promote a Multi-Evidence Paradigm. The counterfactual approach is designed for situations where one wishes to estimate the magnitude of effect of some treatment or exposure, which is common. Adherence to the perfection-seeking standard produces many limitations that scientists should be aware of. These include:

(1) restrictions on data requirements,

(2) restrictions on sanctioned analyses*,

(3) an inability to contribute to building transportable causal knowledge.

There are currently efforts being made to go beyond these limitations, though I believe they are inherent to the paradigm.

Adoption of the Multi-Evidence paradigm can lead to substantial opportunities for "Integrated Causal Analyses" (e.g., Fig. 0.1). However, this will require that subject-matter specialists play a central role in deciding admissible evidence, evidential processes, requirements and standards, and determination (as recommended by the National Academies Report).

*Not possible to study multiple causes:

Ferraro and Hanauer 2014 state,

“Tackling hidden bias in a study that aims to estimate the effects of a single cause is difficult. Tackling such bias in a study that aims to estimate the effects of multiple causes on a single Y (the causes of an effect) is, in our opinion, beyond the reach of current theory and data. For example, a study that purports to estimate the causes (determinants) of deforestation would be better viewed as a study that generates hypotheses for future studies of individual causes, rather than a study that credibly estimates causal relationships between myriad variables and deforestation.”

*These severe limitations are shown not to be inherent to mechanistic causal investigations.

(Grace et al. 2025b "Causal interpretations can be based on mechanistic knowledge.")

*Failure to produce transportable causal knowledge:

The literature on statistical causal inference recognizes that counterfactual causal effects are characterizations of data sets and have no independent meaning. Page 3 clearly shows that transportable knowledge requires knowledge of the mechanistic structures and processes.

My frustrations with the exaggerated and misleading literature on statistical causal inference have led me to refer to that paradigm as "A Bandwagon to Nowhere".

The methodology does not even recognize the concept or existence of causal knowledge. What are we supposed to be doing then?

Once again, the main thing you need to keep in mind is that the current approach, which is essentially the study of causation using correlations, is oversimplified.